Founding Engineer (Europe)

AI / Enterprise Software · Full-time · Remote

Europe

Engineering

About Nimbus

Generic chatbots are a dime a dozen; we’re building the brain behind the machine.

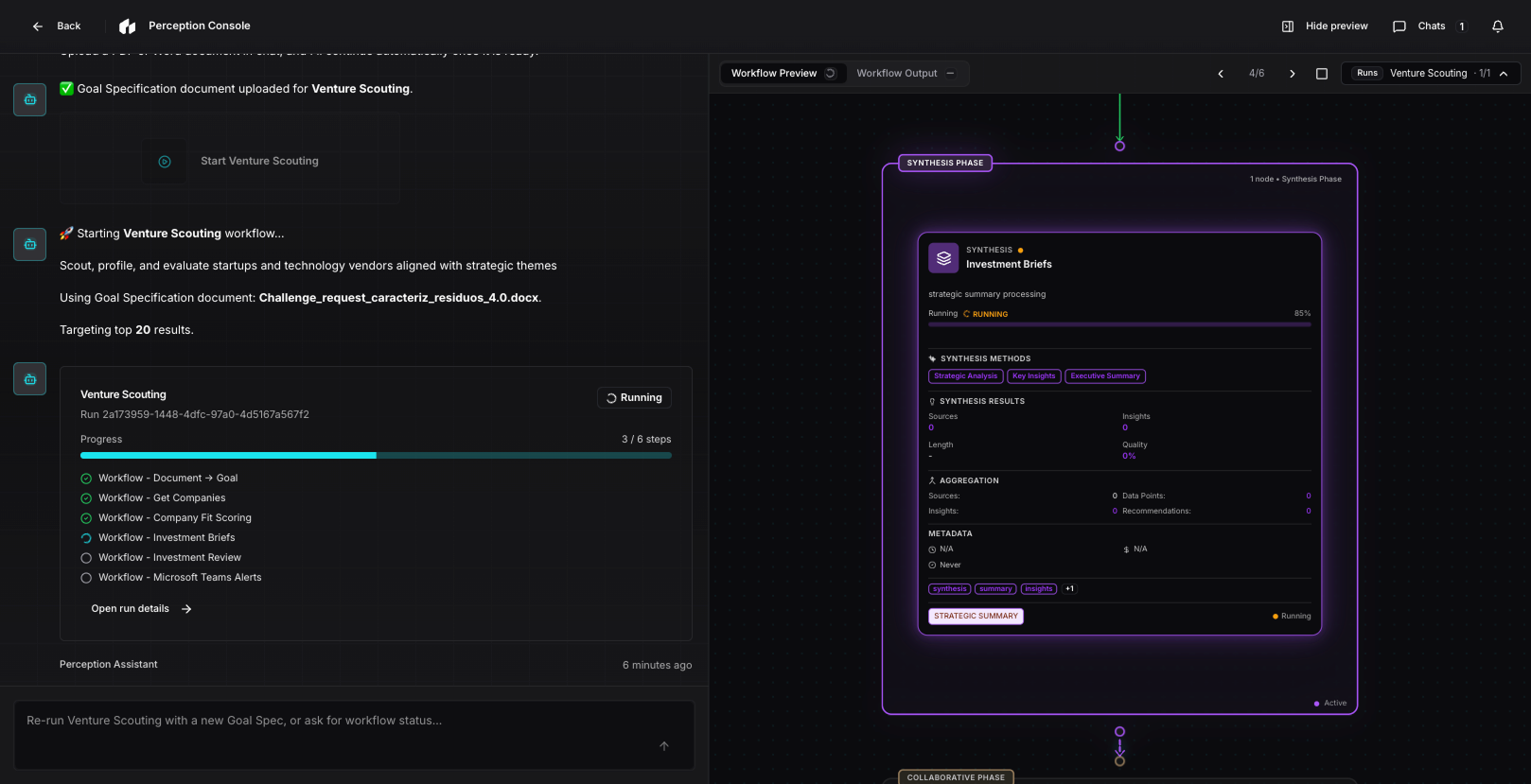

Nimbus is an advanced, modular AI platform designed to move beyond "chat" and into complex multi-agent orchestration. We’ve built a sophisticated ecosystem, merging a Serverless Workflow Engine (Mastra AI) with a dynamic Client Context Ontology (Neo4j), to turn raw data into autonomous decision intelligence.

At Nimbus, we don’t just provide answers; we provide a collaborative workspace where humans and AI agents solve high-stakes enterprise problems through traceable, beautiful, and deeply intuitive visualisations. We are building the future of how work actually gets done.

The Role

We’re looking for a Founding Engineer who treats code as a craft and architecture as an art form—and who will hold the AI stack to the same standard as the rest of the product.

This isn’t just about "building features"; it’s about pioneering the end-to-end experience of a platform that lives at the edge of AI capability. You won’t only connect APIs; you’ll be expected to own substantial vertical slices of the codebase, from GraphQL and event-driven services through to the Vue workspace, while treating model quality, evaluation, and failure modes as first-class engineering problems. In practice, you’ll be:

- Architecting the Core: Building resilient, event-driven services on GCP that power our agentic workflows.

- Defining the Language: Designing robust schema contracts that allow agents and humans to communicate seamlessly—and that stay stable as models and tools evolve.

- Raising the Bar on AI/ML: Going beyond prompt tweaks to own retrieval quality, eval sets, and regression checks for agent behaviour, plus (where the product requires it) training / fine-tuning and deployment discipline for models and embeddings.

- Crafting the Visual Frontier: Building a "high-craft" frontend using Vue 3 and VueFlow. You’ll be responsible for making complex graph- and canvas-heavy workflows feel fluid, responsive, and—let’s face it—magical.

- Bridging Logic & Interface: Implementing how task agents interact with Knowledge Graphs and RAG systems, ensuring that even the most complex AI "thought process" is transparent and intuitive for the user.

If you’re bored of standard CRUD apps and want to build the infrastructure for the next generation of autonomous intelligence—with real accountability for model behaviour in production—let’s talk.

Key Responsibilities

- Platform & ownership: Own and evolve large parts of the stack (frontend and backend) rather than narrow tickets: the workflow catalog, runtime integration, workspace UX, and the services that connect them.

- Platform feature engineering: Develop and maintain the Workflow and Perception Engines, plus continual-learning touchpoints as they land in the codebase, with observability and operational clarity for agent runs.

- AI implementation & critique: Partner on agent tools, causal / graph-grounded interactions, and workflow node patterns; debug agent loops (tools, retries, grounding failures) end-to-end with a sceptical eye toward hallucination, leakage, and cost.

- Evals & model lifecycle: Define and run evaluation harnesses (automated and human-in-the-loop where needed), track regressions when prompts/models/retrieval change, and contribute to decisions on when to fine-tune, swap embeddings, or restructure RAG, rather than relying on static one-off demos.

- Retrieval and vectors: Design and operate embedding + chunking pipelines, vector store usage (e.g. per-tenant collections), metadata for retrieval, and hybrid strategies: vector similarity + keyword + graph expansion where the product requires it.

- API & schema design: Design and implement clear contracts between the GCP backend (Cloud Run, Pub/Sub, Firestore) and the workspace frontend (GraphQL), including Neo4j / GraphQL surfaces where they apply.

- Frontend excellence: Build the collaborative workspace with Vue 3 and VueFlow—complex state, long-lived sessions, enterprise-appropriate data density (tables, charts, boards) without sacrificing clarity; performance on large graphs and lists (virtualisation where needed); accessibility as a default.

- Data pipelines: Build and optimise systems that capture granular decision traces and usage signals (e.g. BigQuery) so product and models improve from real human–agent interactions, not guesswork.

Technical Requirements

- Experience: 5+ years of professional full-stack development, ideally in technical B2B SaaS or API-first environments—with evidence of owning multi-surface features (services + client), not single-layer work.

- Backend expertise: Deep understanding of modern cloud architectures: microservices, serverless compute, and event-driven systems using Pub/Sub or similar message buses.

- Frontend proficiency: Strong experience with Vue 3 (or comparable) and complex interactive flows (e.g. VueFlow); comfortable leading UI architecture where state and performance matter.

- Database knowledge: Practical experience with NoSQL (Firestore) and graph databases (Neo4j).

- AI/ML depth: Hands-on experience shipping LLMs, agents, tool calling, RAG, and prompt workflows in production, plus a track record of evaluation (defining metrics, test sets, regression gates, and explainable failure analysis), not only integrating an API. We will expect real depth in conversation and code review.

- Model lifecycle literacy: Familiarity with tradeoffs for embedding models and vector stores; ability to work with or own pieces of training / fine-tuning or adapter workflows when product requirements go beyond off-the-shelf inference.

Nice to Have

- Experience with AI orchestration frameworks (e.g. Mastra AI, LangChain, LlamaIndex).

- Familiarity with LLM/ML observability and eval tooling (e.g. Langfuse, Weights & Biases, custom harnesses).

- Experience with data orchestration tools (e.g. Restate) or complex analytics in BigQuery.

- Direct experience with Google Cloud Platform (GCP).

- Background in data science, applied ML, or AI research.